- How resilience extends beyond BC/DR to ensure continuous operation at minimum viable service levels

- Why Zero Trust is foundational to reducing risk, limiting blast radius, and enabling rapid recovery

- How to conduct a Business Impact Analysis (BIA) that aligns business priorities with security and operational decisions

- The supply chain and third-party dependencies that are critical factors in enterprise resilience

- How to implement continuous monitoring, testing, and maturity models that enable measurable resilience improvements

Download this Resource

Best For:

Best For:

- Zero Trust Architects & Security Engineers

- Resilience Architects & BC/DR Teams

- Risk Management & GRC Professionals

- Enterprise Architects

- IT & Security Leadership (CXOs, Directors)

Introduction

Resilience is the “ability to remain viable amidst adversity,” defined within 2024 Volume 4 What is Resilience and How Does It Promote Digital Trust (ISACA). Unlike the historical principles behind disaster recovery (DR) and business continuity (BC), where organizations were offline until full operational capability was restored, resilient organizations presume incidents will happen and therefore must work to establish minimal viable service levels (MVSL). Specifically, organizations must define contingency plans to remain operational at or above our MVSLs until full capability is restored.

Zero Trust Guidance for Building a Resilient Enterprise was inspired by a 2024 CSA survey and report sponsored by the Depository Trust & Clearing Corporation (DTCC) titled, “Cyber Resiliency in the Financial Industry 2024.”

Resilience translates into measurable business outcomes—including regulatory defensibility, revenue continuity, competitive differentiation, and optimized investment. Resilience is a strategic advantage, not just a technical function.

Following established Governance, Risk Management, and Compliance (GRC) practices and the Operational Resilience Framework (ORF), we recommend the appointment of an executive accountable to senior leadership and the Board of Directors (BOD) for the organization aiming to meet its resilience objectives. This document uses the term Operational Resilience Executive, following the practice established in the ORF, described as a “qualified executive with the responsibility and authority to ensure appropriate organizational support, implementation, and oversight for operational resilience.”

This paper presumes a basic working knowledge of Zero Trust, and it aims to help practitioners achieve their resilience objectives by leveraging the principles and practices of Zero Trust. With many organizations already on a Zero Trust journey, much of the work may have already been accomplished. The incremental difference could be minimal.

The authors of this paper recognize that each organization is unique, its environment evolves, and organizational change is inevitable. While the paper is primarily for practitioners who are planning, overseeing, and doing the work, senior leadership, board members, regulators, and the like will also find value to help the organization achieve its resilience objectives.

This paper makes extensive use of authoritative sources. This paper benefits from the active engagement by thought leaders, and the authors of the authoritative sources.

This document analyzes the critical role that resilience plays in enabling organizational strength and sustained operations. Resiliency means far more than disaster recovery from operational or IT disruptions, and it extends beyond the traditional boundaries of business continuity planning. Today, resiliency has evolved into a strategic framework for strengthening the entire organization so it may reliably fulfill its purpose. Understanding this expanded role is essential, as resilience has become a key consideration in executive-level decision-making.

Importance of Resilience

Resilience is increasingly important because of the ever-growing complexity of the modern enterprise and the increasingly interconnected world within which every organization resides. Recent disruptions with Amazon Web Services (AWS), Microsoft Azure, and Cloudflare are examples. In response to this growing complexity, legislators and regulators have enacted many new laws and regulations, including the Digital Operational Resilience Act (DORA) (EU Financial), Network and Information Security Directive 2 (EU cross sector), E21, Operational Risk and Resilience (Canada), and the National Resilience Strategy (US).

The importance of resiliency is coming to the forefront for executives around the globe, as shown in articles like, “Why Boards Need to Add Strategic Resilience to the Agenda,” “The Board’s Role in Building Resilience,” and “Harnessing Collaboration to Navigate a Volatile World.”

As resiliency becomes a higher priority in business strategy and goals, it can work to:

- Provide a robust and high integrity supplier of services and products to customers as a competitive advantage

- Influence merger, acquisition, and divestiture strategies

- Make security decisions on the procurement of products and services, including third party providers

- Ensure regulatory compliance

- Oversee access to critical systems, management of policies and user identification, and privilege levels commensurate with Zero Trust best practices

- Ensure that processes are strong and improvements are continually measured

- Ensure costs are planned and comply with agreed plans

Realizing Resilience

The Business Impact Analysis (BIA) framework is used to stack rank priorities, ensure alignment, and set MVSLs to help manage complexity and ensure compliance with legislation and regulation. The outputs of the BIA become the requirements driving strategy, architecture, design, build, test, and operations.

Unlike traditional disciplines like DR, which heavily rely on technology, and BC, which relies heavily on people and process, resilience depends on an active collaboration between people, process, technology, and organizations to meet objectives. Gone are the days when we relied solely on technical controls to prevent incidents. With resilience we focus on the collaboration of people, process, and technical and organizational controls, recognizing that incidents will happen.

At its core, resilience is a new way of viewing and protecting the enterprise. The interconnected nature of the modern world compels us to consider how incidents outside our enterprise affect us and how incidents within our own perimeter impact others.

True resilience goes beyond risk identification and mitigation; it is about preparing for disruption, adapting to change, ensuring continuity of essential services, and collaborating with internal and external stakeholders to recover quickly and maintain trust. It also includes continuous monitoring for new threats, learning from incidents, and continually improving. In building resilience, we seek to identify, eliminate, or at least mitigate:

- Single Points of Failure (SPOF)

- Concentration Risk (risk aggregation)

- Counterparty Risk

- Contagion

- Cascading Risks

Resilience and the Role of Zero Trust

“The art of war teaches us to rely not on the likelihood of the enemy not coming, but on our own readiness to receive him; not on the chance of his not attacking, but rather on the fact that we have made our position unassailable.” - Sun Tzu, The Art of War

Historically, we believed as an industry we could prevent incidents by the deliberate implementation of defenses. The few that got through would easily be addressed by the technical staff or would be covered by insurance (risk transfer). We also believed technical controls were sufficient defenses. After all, it is the digital assets under attack.

That is no longer the case. Incidents happen quickly and too often. Incidents are too disruptive, taking businesses offline for days, weeks, or even months. The impact to the business is too great. Too much to be covered by insurance. The long tail can last years with resulting lawsuits and loss of digital trust lasting years. In some cases, incidents are so damaging to organizations and their supply chains that government intervention or support is required, as seen in the UK with the Jaguar Land Rover (JLR) breach.

Malicious actors understand that many years of implementing technical controls have made these technical controls our strongest defense, so they go around them by attacking the people, the process, and the organizational dimensions. Most agree attacks on the human dimension (social engineering) are key in more than 90% of incidents. Some believe the number is 98%.

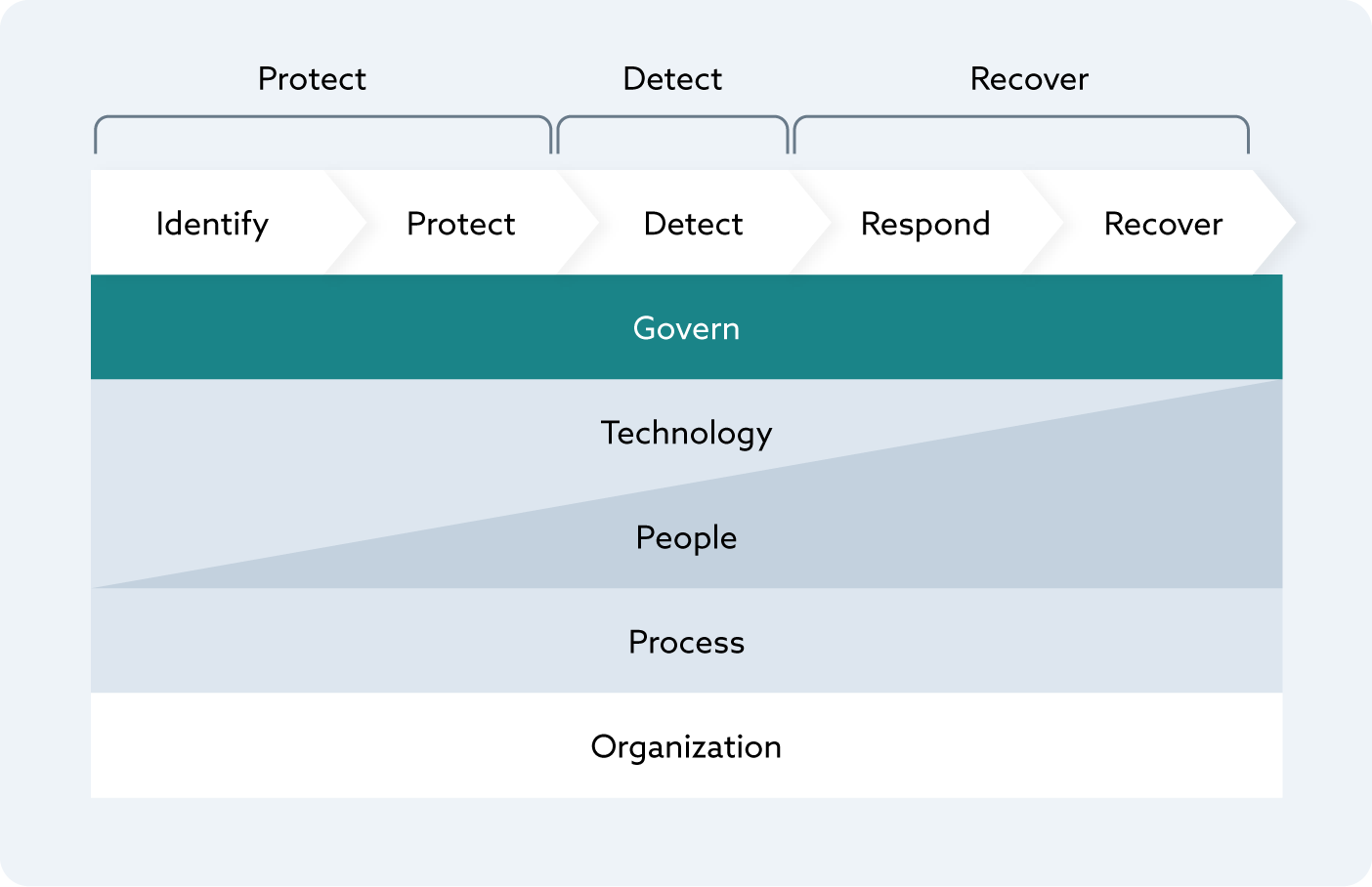

What to do? The solution is simple, but difficult to execute. Instead of just focusing on technical defenses, we focus on the technology, the people, the processes, and the organizational dimensions through the entire cybersecurity lifecycle of protecting, detecting, and recovering. NIST decomposes this into the six functions: Govern, Identify, Protect, Detect, Respond, Recover in the Cybersecurity Framework (CSF). There is no material difference in principle.

Figure 1. The Cyber Life Cycle

Figure 1. The Cyber Life Cycle

This is graphically represented in the 2024 Volume 4 What is Resilience and How Does It Promote Digital Trust (ISACA).

Resilience builds upon this in crucial ways, and no longer can we treat the delivery of products and services as either on or off like we did with BC/DR. Rather we look for ways of remaining viable amidst adversity. The military refers to this as “mission assurance.” Instead of being on or off, alive or dead, we operate above the MVSL until we can fully restore.

An easily understandable example is a municipal water supply taken offline by a cyber incident. If your objective is that no more than 10k constituents cannot be without fresh water for more than 8 hours, emergency services and hospitals cannot be without fresh water for more than 2 hours, and commercial buildings cannot be without fresh water for more than 4 hours, the MVSL may be achieved by implementing alternative strategies to remain viable until full operational capability is restored.

To remain viable, the main strategy should be supplemented by alternative strategies. Alternatives allow the organization to deliver the services but at a degraded capacity. After 2 hours, municipal water supplies may be insufficient for firefighting operations. The fire department has alternatives. They staff, train, and equip their organization to use alternative water sources (e.g., lakes, rivers) to perform hose relays and tanker operations. Hospitals have water supply contracts in place with vendors that will provide water deliveries to storage tanks on site. Cities plan to distribute bottled water to communities until municipal water is restored.

Use the Business Impact Analysis (BIA) to increase alignment between the business strategy, the security architecture, and operations. Resilience takes alignment and an understanding of dependencies. Let’s face it, nobody has unlimited resources and not all services are created equal. Identifying the high risk areas and knowing our priorities help to focus our attention and determine the best allocation of resources.

Focus on external dependencies. Our world is more interconnected than ever. We rely on Cloud Service Providers (CSP), Managed Service Providers (MSP), and Managed Security Service Providers (MSSP). Historically, our plans focused heavily on our internal systems, internal assets, and internal dependencies. To be successful, we have now expanded our plan to look at external dependencies intricately. Think about what services you cannot deliver to your customers if your CSP or SaaS provider experiences an outage. Verizon’s 2025 Data Breach Investigation Report (DBIR) shows 30% of incidents are the results of a third party. That is doubled from the previous year. Other sources, like Security Scorecard and Marsh, believe the percentage is almost twice as much.

Zero Trust is a critical part of resiliency. The guiding principles for planning, implementing, and operating Zero Trust align to the concept of resilience. These guiding principles are:

- Begin with the End in Mind (Business and Mission Objectives)

- Do Not Overcomplicate

- Products are Not the Priority

- Access is a Deliberate Act

- Inside Out, not Outside In

- Breaches Happen

- Understand Your Risk Appetite

- Ensure the Tone from the Top

- Instill a Zero Trust Culture

- Start Small and Focus on Quick Wins

- Continuously Monitor

The same principles that guide Zero Trust’s implementation also guide resilience efforts. The foundational concepts of always verifying identity and access controls are essential to building resilience. Both Zero Trust and resilience employ techniques to reduce the blast radius, thereby reducing the impact and fostering faster recovery. Both employ techniques to continuously monitor. Support at a senior level is imperative for both Zero Trust and resilience.

Resilience Definitions

“It is not the strongest of the species that survive, nor the most intelligent, but the one most responsive to change.” - Leon C. Megginson, Professor of Management and Marketing at Louisiana State University at Baton Rouge (paraphrasing Charles Darwin)

Resilience and Zero Trust presume bad things will happen and emphasize preparation. Rather than focusing all efforts to avoid bad things, our objective is to prepare for when they happen. We make it difficult for an attacker to achieve their goals after a breach by limiting the blast radius when incidents occur; preparing people, processes, and technology to respond and recover quickly; and learning from the experience to make us stronger. These concepts are reflected in the definitions from major standards bodies.

NIST SP 800-39 Managing Information Security Risk Organization, Mission, and Information System View defines resilience as “[t]he ability to prepare for and adapt to changing conditions and withstand and recover rapidly from disruption.”

Similarly, ISO defines organizational resilience in ISO/DIS 22316 Security and Resilience as “the ability of an organization to respond and adapt to change. Resilience enables organizations to anticipate and respond to threats and opportunities, arising from sudden or gradual changes in their internal and external context.”

Canada defines operational resilience as “the ability to deliver operations, especially critical operations, through disruption.”

The most complete and useful definition for the practitioner that aligns with the principals of Zero Trust is from 2024 Volume 4 What is Resilience and How Does It Promote Digital Trust (ISACA): “Simply put, resilience is about remaining viable amidst adversity and being better for it. That means aligning technology strategy with business strategy and operations. It means moving away from a strategy of continually layering controls to mitigate cyber risk to a strategy where we consider different forms of risk treatments with an eye toward a collaboration among technology, people, processes, and the organization.”

Resilience Is More than Business Continuity and Disaster Recovery

Business Continuity and Disaster Recovery (BC/DR) began when business operations were not as dependent on technology as they are today. BC/DR also began when organizations were still relatively stand-alone. Today, most organizations are highly interconnected.

Resilience extends the scope outside of the four walls of the organization to External Service Providers (ESP), vendors, and supply chains. Resilience is about the collaboration of people, process, technology, and organization across the full cyber lifecycle—protect, detect, and recover. NIST decomposes protection into two phases and recovery into two additional phases, as part of the Cybersecurity Framework (CSF).

Disaster Recovery (DR) is the recovery of IT after an incident. DR focuses on technology. Business Continuity (BC) is the recovery of the business activities after an incident. BC focuses on the people and process. DR and BC are separate activities, and BC cannot be completed until DR has restored the technology. Both DR and BC are activated after an incident and are black or white. That is to say, the technology and the business are either on or off. Resilience combines DR and BC to extend them beyond the enterprise.

Traditionally, BC/DR looks at an organization’s enterprise with little regard for the organization’s role in the ecosystem. In the world of resilience, we are attuned to how a disruption at a supplier can ripple through the ecosystem, impact us, and those we are connected to. For example, if a Cloud Service Provider (CSP) is offline, it can adversely impact our ability to deliver products and services to others. Verizon’s 2025 DBIR shows the percentage of incidents from third parties doubled from 2023 to 2024.

From the perspective of Zero Trust, resilience primarily involves prioritizing strong Identity and Access Management (IAM), followed by efforts to reduce the blast radius of a breach. It is important for us to reduce the blast radius of any incident from a third party in much the same way we use segmentation and micro-segmentation on an internal network. We cannot have an incident at a third party impact our ability to deliver products and services, and we should not negatively impact those to whom we are connected. It is incumbent on every member in an ecosystem to avoid contagion and to reduce the spread. Identifying the interconnections between components and what they rely on is critical. For example, if an application is restored but it cannot be accessed because the IdAM is offline, you cannot deliver your products and services. Large organizations often have networks dedicated to backing up systems. If that network is not online, you will not be able to recover after an incident.

How we measure success changes when it comes to resilience. Resilience prioritizes business activities and establishes acceptable levels of service through a Business Impact Analysis (BIA). (See the Role of the Business Impact Analysis (BIA) section.) With BC/DR, measurable goals like recovery point objectives (RPO), recovery time objectives (RTO), impaired state objectives, and minimal viable service levels (MVSLs) are crucial. (See the Metrics and Indicators section.) Once we know what is important and what the minimal acceptable levels are, we can identify the people, the process, the technology, and the organizational components that cooperate to provide products and services.

Cyber Resilience Maturity Models

Within the Zero Trust approach, there exist two maturity models and a resilience maturity curve. The philosophies behind each are aligned, but the mechanics are different.

-

The Global Resilience Federation’s (GRF) Resilience Maturity Model complements the Operational Resilience Framework (ORF) to assess an organization’s operational resilience progress and readiness. It provides a spreadsheet tool, aligned with NIST and ISO controls, that helps organizations understand their current operational resilience, identify gaps, and plan for improvements to minimize service disruptions during events by focusing on data recovery and service provision

-

The Cyber Resilience Capability Maturity Model (CR-CMM) helps organizations assess and improve their operational resilience. The CR-CMM is independent of legislation, regulation, standards, and frameworks. The primary goal of the CR-CMM is to provide a structured approach to assess an organization’s current cyber resilience maturity and prioritize areas for improvement

-

The Cybersecurity Capability Maturity Model (C2M2) is a framework developed by the U.S. Department of Energy (DOE) to assess and enhance an organization’s cybersecurity posture for both information technology (IT) and operational technology (OT). The model uses 356 practices across 12 domains.

Operational Resilience Framework Maturity Model

Traditional BC/DR focuses on data recovery with little regard for providing services during a disruption. The GRF Business Resilience Council (BRC) launched a multi-sector working group in 2021 to take on this challenge. The Operational Resilience Framework (ORF) was developed for organizations to withstand, recover from, and adapt to cyberattacks as well as natural and accidental disruptions. The primary goal is to reduce operational risk, minimize service disruptions, and limit systemic impacts from destructive attacks and adverse events.

The framework provides rules and implementation aids that support a company’s recovery of immutable data, while also uniquely allowing it to minimize service disruptions in the face of destructive attacks and events.

The ORF was developed to be broadly applicable and is aligned with existing controls like those from NIST and ISO. Available resources include the following:

-

ORF Rules: Overview of all components of the ORF targeted to practitioners including information on the steps, rules, terminology, implementation aids, and future activities

-

ORF Rules and Maturity Model (spreadsheet): A spreadsheet containing the ORF v2 rules and maturity model to serve as a vital tool for organizations to assess their operational resiliency progress and readiness. Also includes a mapping of ORF Rules to associated NIST 800-53 and ISO 27001 controls

-

ORF Glossary (spreadsheet): A list of common terms and definitions used within the ORF

Learn more at https://www.grf.org/orf.

Cyber Resilience Capability Maturity Model (CR-CMM)

The CR-CMM helps organizations measure, benchmark, and enhance their resilience across ten key domains. The CR-CMM is a community-driven practical tool inspired by the famous SOC-CMM and aligned with NIST SP 800-160, the MITRE Cyber Resiliency Engineering Framework, and other best-in-class frameworks (e.g., ORF, Sheltered Harbor, CTI-CMM). While being sector- and size-agnostic, the CR-CMM aligns with industry best practices and draws from widely recognized frameworks maintained by organizations such as NIST and MITRE.

The maturity levels range from initial (where resilience practices are reactive and uncoordinated) to optimized (where resilience is proactive, integrated into all aspects of system design, and supported by continuous improvement).

Achieving true cyber resilience requires a structured, measurable approach and accountable leadership to continuously drive the awareness and improvement of a cyber resilient posture. Like Zero Trust, cyber resilience is an overused term that means different things to different people—whether in the industry or among regulators. This lack of clarity makes it harder to define what true cyber resilience capabilities are and to choose the right set and scale of capabilities for an organization.

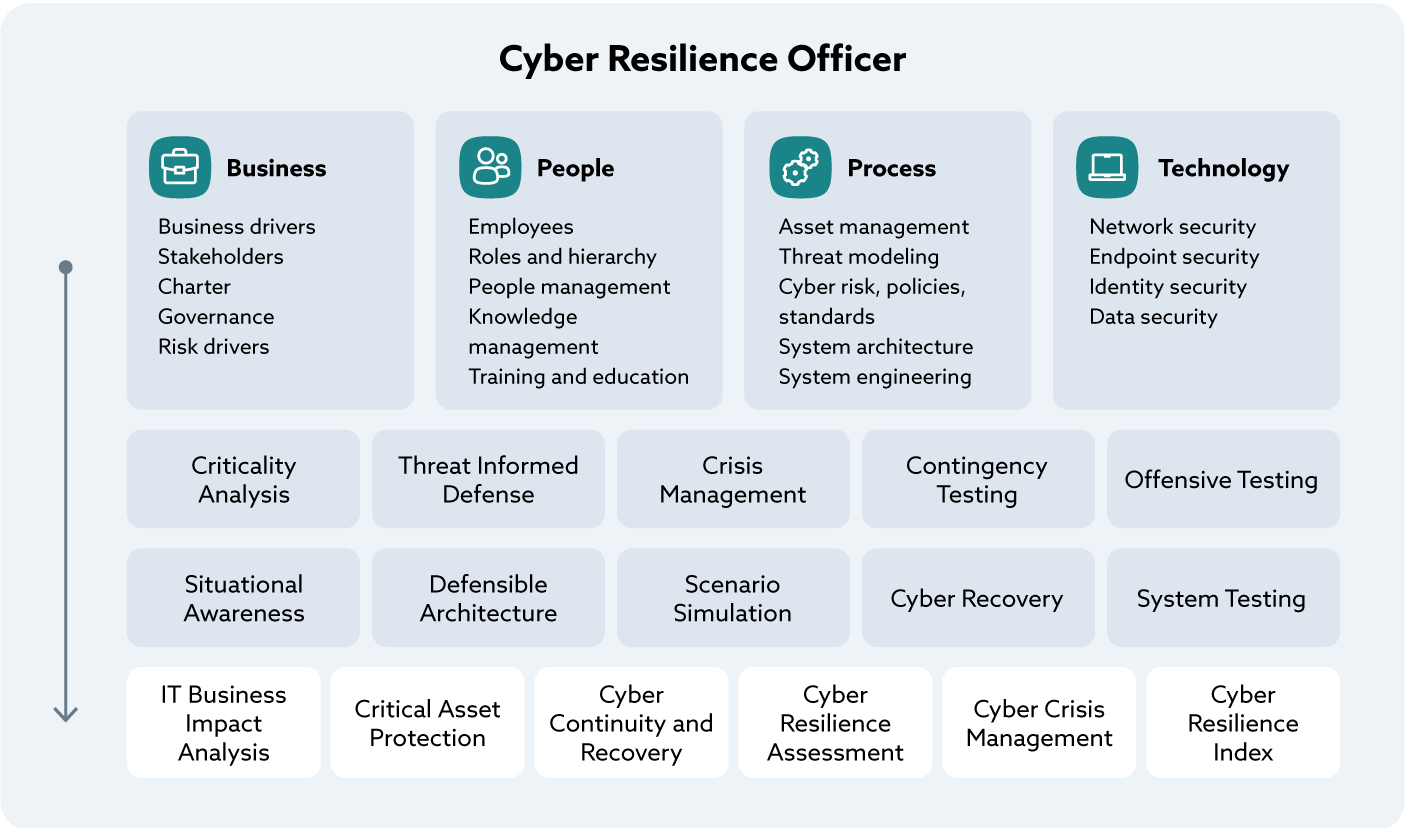

An organization’s cyber resilience efforts primarily aim to ensure the survivability of mission-critical functions before, during, or after a coordinated, destructive cyberattack. Such cyber resilience efforts must address the continuously evolving risks from advanced and unpredictable adversaries. See figure below “Cyber Resilience Officer.”

Figure 2. Cyber Resilience Officer

Figure 2. Cyber Resilience Officer

Zero Trust and CR-CMM: When “Never Trust, Always Verify” Meets “Withstand and Adapt”

CR-CMM helps organizations put strategies like Zero Trust into practice by connecting high-level principles with concrete, measurable capabilities. Take, for example, the interplay between criticality analysis, situational awareness, and defensible architecture.

A Zero Trust journey begins by asking a deceptively simple question: what matters most? Not every system or dataset requires the same level of scrutiny. Through criticality analysis, the CR-CMM guides organizations to identify which assets, applications, and processes are genuinely mission-critical. This focus ensures that protection efforts are not diluted across the entire IT estate but are directed toward the “crown jewels” that must be defended at all costs.

Once those priorities are set, resilience depends on the ability to see and interpret what is happening around them. Situational awareness provides this visibility. It equips teams with the capability to continuously monitor identities, devices, and sessions, aligning with Zero Trust’s principle that authentication and authorization must be dynamic. By embedding this practice into the maturity model, CR-CMM ensures that Zero Trust monitoring is not an isolated control but part of a broader cycle of anticipation, detection, and adaptation.

Finally, defensible architecture brings these ideas into the design of the systems themselves. Zero Trust is not simply about blocking or denying—it is about building infrastructures that can adapt under stress, limit the attacker’s freedom of movement, and preserve essential functions even in adverse conditions. CR-CMM captures this through the emphasis on layered defense, diversity of controls, and the ability to reposition resources dynamically.

Seen together, these three practices illustrate how the model transforms Zero Trust from a principle into a living strategy. By clarifying what is critical, ensuring constant awareness, and embedding resilience into architecture, the CR-CMM provides a structured pathway for organizations to uplift their cyber resilience posture in line with Zero Trust thinking.

Guiding the Cyber Resilience Officers with CR-CMM

The CR-CMM is a powerful tool for organizations seeking to strengthen their cyber resilience posture. By applying it on a regular basis (e.g., bi-annually, annually), companies are able to evaluate and benchmark their current maturity against an established baseline, gaining a clear picture of where they stand today. This understanding naturally feeds into the development of a strategic roadmap, helping leaders identify which resilience practices to enhance and how to advance maturity over time.

Beyond measurement, the model also plays a role in guiding investment and informing strategic initiatives. It helps decision-makers prioritize resources where they matter most, ensuring that each step taken contributes directly to a stronger resilience posture. Just as importantly, it creates a common language for talking about cyber resilience—one that bridges the gap between technical teams, business processes, and executive priorities.

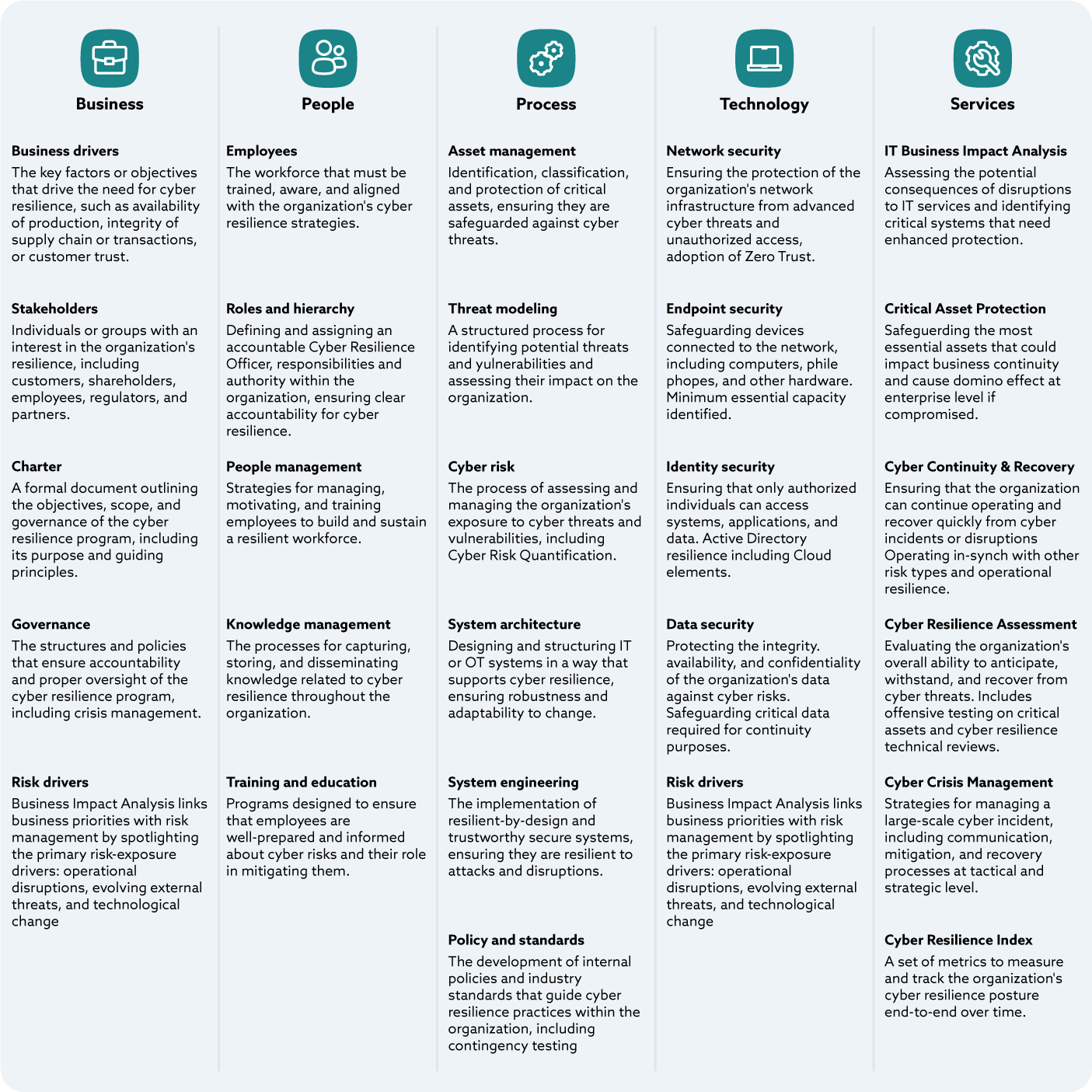

Figure 2 entitled CR-CMM Logical Architecture illustrates how four foundational enablers (Business, People, Process, Technology) support the development of cyber resilience capabilities Together they allow a Cyber Resilience Officer team to deliver six essential resilience services to the organization at the bottom of the illustration (IT Business Impact Analysis, Critical Asset Protection, Cyber Continuity and Recover, Cyber Resilience Assessment, Cyber Crisis Management, Cyber Resilience Index).

Figure 3. CR-CMM Logical Architecture

Figure 3. CR-CMM Logical Architecture

In practice, this common framework encourages collaboration across functions that often work in silos, such as cybersecurity, business continuity, IT operations, and risk management. By aligning their efforts under a unified strategy, these groups can drive more consistent and sustainable outcomes. The CR-CMM also facilitates standardized knowledge sharing between organizations, allowing them to compare progress, exchange insights, and build on shared practices. Over time, this collective approach strengthens not only individual companies but the wider ecosystem, reinforcing cyber resilience as a shared responsibility.

Learn more about the Cyber Resilience Capability Maturity Model at www.cr-cmm.org.

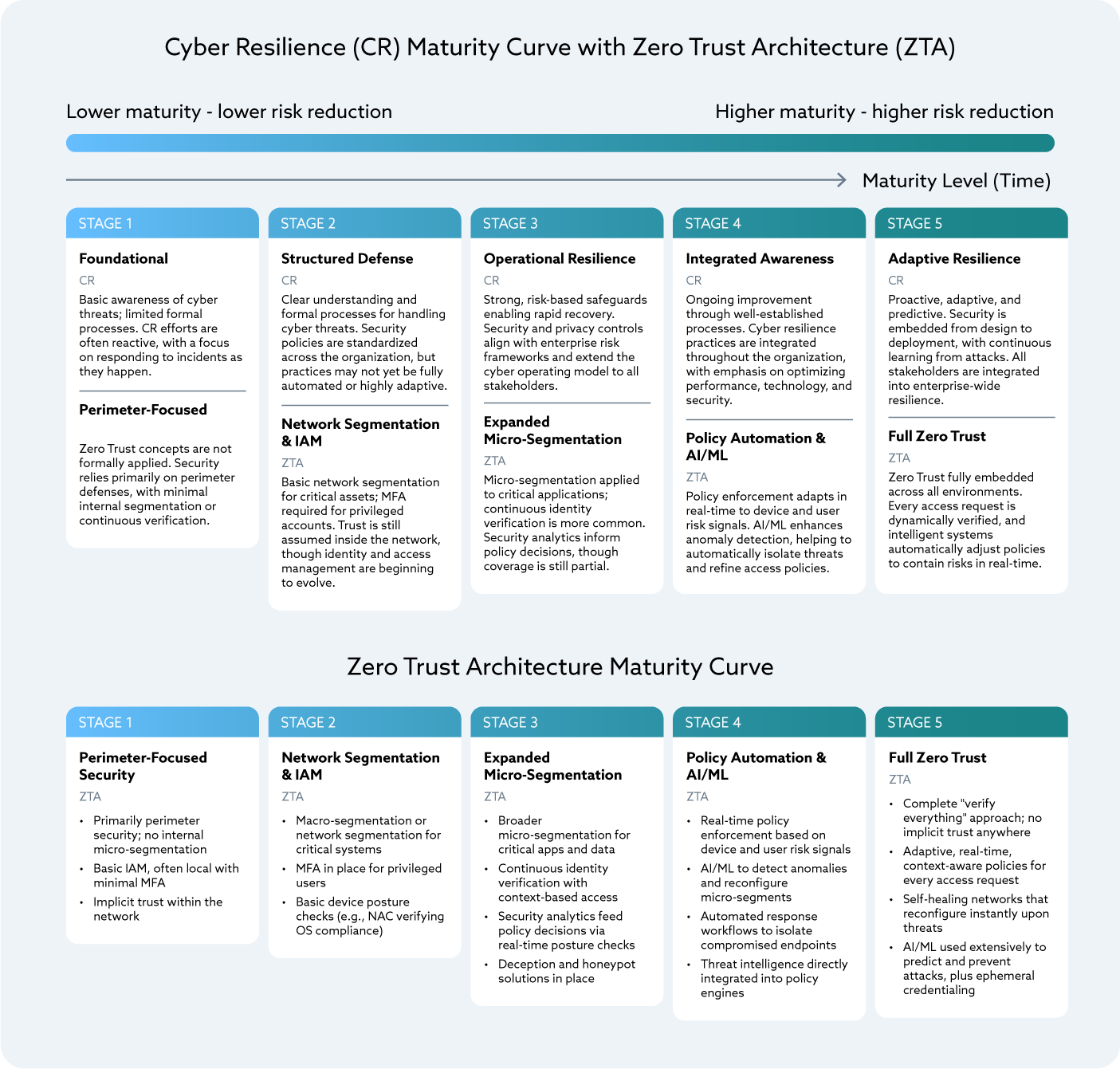

Cyber Resilience Maturity Curve Integrated with Zero Trust Architecture: A Framework for Financial Institutions

Cyber resilience marks an exciting evolution in security strategy. Rather than focusing on preventing every potential incident, it champions the idea of maintaining business continuity, even amidst unavoidable disruptions. Financial institutions, entrusted with vital market infrastructure and sensitive customer information, are feeling the pressure from regulators to embrace resilience-first approaches. Enter Zero Trust Architecture (ZTA) as a foundation in cyber resilience. ZTA operates on the principle of no implicit trust, inside or outside the network.

The Cyber Resilience Maturity Curve with ZTA provides a clear roadmap for financial institutions to evaluate their current security measures, plan future investments, and demonstrate compliance with regulatory standards. Each stage of the curve enhances risk management and also aligns seamlessly with established regulatory frameworks like the NIST Cybersecurity Framework, ISO/IEC 27001, and the Basel Committee’s Principles for Operational Resilience.

The Five Stages of the Maturity Curve and Compliance Relationship

1. Foundational Awareness

-

Description: Institutions are primarily reactive. Cybersecurity relies heavily on perimeter defenses with limited segmentation and ad-hoc incident handling

-

ZTA Capability: Minimal. Trust is implicit within the network, and there is no continuous verification

- Regulatory Alignment:

- NIST CSF: Identify (ID) — partial alignment through asset awareness

- ISO 27001: Control A.5.1 (Policies for information security)

- Basel Principles: Low alignment; gaps in risk management expectations

- DORA: Initial ICT risk identification (Article. 6)

2. Structured Defense

-

Description: The organization begins to formalize cybersecurity controls through standardized policies, centralized IAM, and baseline segmentation. Defensive capabilities are more consistent, but enforcement remains largely static and manual. Security is still treated as a control function, not a business resilience enabler. Cloud access is mediated through MFA and IAM, yet trust decisions do not adapt dynamically to context, behavior, or risk. Incident response and recovery planning remain siloed

-

ZTA Capability: Basic application of segmentation and authentication for privileged accounts

- Regulatory Alignment:

- NIST CSF: Protect (PR) — particularly PR.AC (Access Control) and PR.AU (Awareness and Training)

- ISO 27001: A.9 (Access control), A.10 (Cryptography)

- Basel Principles: Early demonstration of resilience planning, but largely tactical

- DORA: Protection and prevention measures (Article. 9)

3. Operational Resilience

-

Description: Cybersecurity evolves into a resilience capability aligned with business objectives. Critical business services, supporting systems, and dependencies are identified and protected. Micro-segmentation, continuous monitoring, and conditional access policies are introduced to reduce attack surfaces and limit blast radius. Analytics begin guiding policy enforcement. Decisions are increasingly guided by telemetry and analytics. Incident response and recovery processes focus on maintaining service availability rather than system-level recovery alone

-

ZTA Capability: Expanded micro-segmentation and conditional access based on identity

- Regulatory Alignment:

- NIST CSF: Detect (DE) and Respond (RS) — DE.CM (Security Continuous Monitoring), RS.MI (Mitigation)

- ISO 27001: A.12 (Operations security), A.16 (Incident management)

- Basel Principles: Principles 2–3 (Governance and operational resilience framework)

- DORA: Recovery and Response (Article. 11)

4. Integrated Assurance

-

Description: Security practices and resilience are embedded across enterprise functions, including application development, cloud platforms, third-party ecosystems, and business operations. Security controls continuously adapt based on risk signals, using automation and AI-driven analytics. Policy enforcement becomes dynamic and evidence based, supported by AI/ML for anomaly detection and automated response. Control effectiveness, not just control presence, is demonstrable. Zero Trust principles are deeply integrated into CI/CD pipelines, infrastructure-as-code, policy-as-code, and cloud-native architectures

-

ZTA Capability: Adaptive controls, real-time policy automation, automated threat isolation

- Regulatory Alignment:

- NIST CSF: Recover (RC) — RC.IM (Improvements), RC.CO (Communications)

- ISO 27001: A.14 (System acquisition, development and maintenance), A.18 (Compliance)

- Basel Principles: Principle 5 (Operational resilience embedded into business processes)

- DORA: Learning and Evolving (Article 13)

5. Adaptive Resilience

-

Description: Security is predictive and proactive. Zero Trust is fully institutionalized across all environments (on-prem, cloud, SaaS, and third-party environments), continuously validated in real-time. Stakeholders—including regulators, customers, and third parties—are integrated into the enterprise-wide model. Security and resilience decisions continuously adjust in real time based on evolving threats, business priorities, and ecosystem dependencies. Testing, validation, and assurance are continuous. Resilience metrics are shared with regulators, partners, and customers when appropriate. The organization demonstrates the ability not only to withstand disruptions but to adapt and improve through them

-

ZTA Capability: Full Zero Trust adoption. Every access request is dynamically verified; intelligent systems continuously refine defenses; security is embedded into every layer of the organization’s infrastructure

- Regulatory Alignment:

- NIST CSF: Full integration across Identify–Protect–Detect–Respond–Recover

- ISO 27001: Full enterprise-wide coverage of Annex A controls, with continuous improvement cycles

- Basel Principles: Principle 7 (Testing operational resilience), Principle 9 (Managing interconnections with third parties)

- DORA: Advanced testing of ICT tools, systems, and processes based on TLPT (Article 26)

Practical Benefits for Financial Institutions

-

Benchmarking Current State: Institutions can assess whether they remain reactive (Stages 1–2) or have progressed toward integrated resilience (Stages 3–5)

-

Targeted Investment: By mapping specific ZTA capabilities to compliance frameworks, organizations can prioritize investments that both reduce risk and satisfy regulatory audits

-

Demonstrating Compliance: Regulators increasingly expect evidence of resilience testing and maturity progression

-

Third-Party Risk Integration: Especially relevant in banking ecosystems with vendor reliance, Stages 4 and 5 demonstrate alignment with supervisory expectations on outsourcing and supply chain resilience

For financial institutions, cyber resilience is both a security imperative and a regulatory requirement. The CR Maturity Curve integrated with ZTA offers a pragmatic roadmap to strengthen defenses, align with global compliance frameworks, and sustain operations even during high-impact cyber events. Progression along the curve not only demonstrates reduced risk exposure but also positions institutions as leaders in governance, trust, and systemic stability.

Visual Representation

Figure 4. Cyber Resilience and Zero Trust Maturity

Figure 4. Cyber Resilience and Zero Trust Maturity

Table 1 illustrates the relationship between Maturity Levels, Zero Trust Capabilities and Regulatory Alignment.

Maturity Curve with Regulatory

| Stage | Description | ZTA Capability | Regulatory Alignment |

|---|---|---|---|

| 1. Foundational Awareness | Reactive, perimeter-based, limited processes | Minimal ZTA; implicit trust | NIST CSF ID; ISO A.5.1; Basel low alignment; DORA Article 6 |

| 2. Structured Defense | Formal policies, segmentation, MFA | Basic segmentation, privileged IAM | NIST PR.AC/PR.AU; ISO A.9/A.10; Basel tactical alignment; DORA Article 9 |

| 3. Operational Resilience | Recovery, micro-segmentation, analytics | Expanded micro-segmentation, continuous verification | NIST DE.CM, RS.MI; ISO A.12/A.16; Basel governance; DORA Article 11 |

| 4. Integrated Assurance | Embedded resilience, automation, AI/ML | Adaptive controls, real-time policy automation | NIST RC.IM/RC.CO; ISO A.14/A.18; Basel integration into business processes; DORA Article 13 |

| 5. Adaptive Resilience | Predictive, proactive, full Zero Trust | Full dynamic verification, intelligent refinement | Full NIST CSF; ISO Annex A; Basel principles on testing and third-party resilience; DORA Article 26 |

Table 1.

Legislation, Regulations, Frameworks, Standards

Landscape

When it comes to resilience, there is a mosaic of legislation, regulations, frameworks, and standards. Some are focused on jurisdiction, others are sectoral, while others are horizontal across geographies and sectors. The most significant standards and frameworks include:

- ISO 22316:2017 Security and resilience—Organizational resilience—Principles and attributes

- NIST SP 800-160 Vol. 2, Revision 1, Developing Cyber-Resilient Systems: A Systems Security Engineering Approach

- Business Resilience Council (BRC), Operational Resilience Framework (ORF)

Legislation

Resilience is a key element of the U.S. National Cybersecurity Strategy issued by the White House in 2023. A series of Executive Orders (EOs), legislation, and regulations have been subsequently issued. The National Resilience Strategy was issued in 2025. Other resilience-related initiatives include:

-

The U.S. President’s Council of Advisors on Science and Technology (PCAST) is a federal advisory committee appointed by the President to augment the science and technology advice available to him from inside the White House and from the federal agencies. In February 2024, PCAST issued a report, “Strategy for Cyber-Physical Resilience: Fortifying Our Critical Infrastructure for a Digital World.” The PCAST report is driving resilience within the United States and, to a lesser extent, U.S. allies. The PCAST report is silent on use of standards while adopting many of the key elements and rules from the GRF ORF

-

Digital Operational Resilience Act (DORA) is a European Union (EU) regulation that requires financial entities to improve their operational resilience. The regulation is designed to standardize and strengthen the information and communication technology (ICT) security and operational resilience of the financial sector in the EU and serving customers in the EU. DORA imposes requirements on both the entity, their supply chain, and the infrastructure components that serve them (e.g., the cloud). DORA builds upon ISO 27001 (Information Security), ISO 3100 (Risk Management), ISO 22316 (Resilience), and ISO 22336 (Resilience implementation)

-

Network and Information Systems Directive 2022/0383 (NIS2) is an EU regulation designed to raise the cyber hygiene of entities within the EU and those that serve EU residents. Like DORA, NIS2 is not sector specific. Like DORA, NIS2 imposes obligations on the entity, their supply chain, and the infrastructure components that serve them (e.g., the cloud). NIS2 builds upon ISO 27001 (Information Security), ISO 3100 (Risk Management), ISO 22316 (Resilience), and ISO 22336 (Resilience implementation)

-

Cyber Resilience Act (CRA) is an EU regulation that supports other cyber and resilience efforts by imposing requirements on products with digital elements. CRA builds upon ISO 27001 (Information Security), ISO 3100 (Risk Management), ISO 22316 (Resilience), ISO 22336 (Resilience implementation)

-

Office of the Superintendent of Financial Institutions (OFSI), Canada Resilience (E21) is the equivalent of DORA for Canada. E21 imposes obligations on the finance sector operating in Canada or service clients in Canada. E21 builds upon ISO 27001 (Information Security), ISO 3100 (Risk Management), ISO 22316 (Resilience), ISO 22336 (Resilience implementation)

-

India’s Cybersecurity and Cyber Resilience Framework (CSCRF) for SEBI Regulated Entities (REs) is a set of standards and guidelines established by the Securities and Exchange Board of India (SEBI) to enhance cybersecurity for entities they regulate

- PRA / FCA Operational Resilience is a set of final rules and guidance on new requirements to strengthen operational resilience in the financial services sector from the Financial Conduct Authority (FCA) and the Prudential Regulation Authority (PRA)

-

European Critical Entity Resilience (CER) is a directive from the European Parliament and the Council of the European Union

- HKMA Cyber Resilience Assessment Framework (C-RAF) is part of the HKMA Cybersecurity Fortification Initiative

Control Matrices

Control matrices are important for compliance and general security. While not sufficient for achieving resilience, control matrices play a necessary role. Frameworks, like the ORF, include mappings to multiple control matrices.

The Cloud Controls Matrix (CCM) developed by the Cloud Security Alliance (CSA) contains a Business Continuity Management and Operational Resilience (BCR) domain. The BCR domain contains eleven control specifications. In addition to the controls and the implementation guidelines, the BCR domain includes vendor/service-specific Shared Security Responsibility Model (SSRM) guidance in CAIQ responses from the CSPs. Learn more about the CCM with these resources from CSA:

- CCM Video Series: BCR - Business Continuity Mgmt & Op Resilience

- NIST SP 800-53 Rev. 5 Security and Privacy Controls for Information Systems and Organizations (SP 800-53 Rev 5.2.0) was issued as a special release on August 27, 2025. Among other things, the incremental release includes a control dedicated to resilience, SA-24, “Design for Cyber Resiliency”

- Mitre Enterprise Candidate Mitigations which represent security concepts and classes of technologies that can be used to prevent a technique or sub-technique from being successfully executed

Collective Resilience

Collective resilience is the coordinated ability of organizations operating in an interdependent ecosystem (e.g., enterprises, vendors, suppliers, partners, platforms) to sustain Minimum Viable Service Levels (MVSLs) under stress, operate in predefined impaired states, and recover quickly in ways that limit systemic impact to others. Resilience is therefore an ecosystem property, not just an enterprise property—it extends beyond a single organization to the end-to-end ecosystem that actually delivers the service. Within the Operational Resilience Framework (ORF) from the Business Resilience Council (BRC), this begins by understanding your role in the ecosystem: identify and prioritize stakeholder groups, classify services (e.g., Operations-Critical, Business-Critical), and establish the targets that must be met when conditions are degraded.

Traditional collective defense concentrates on shared situational awareness and coordinated containment so each member can better prevent or limit incidents. Collective resilience becomes clearer when viewed through real-world interdependency and failures. For example, a CSP regional outage can create immediate, multi-tenant disruption, preventing numerous organizations from accessing critical SaaS platforms—even when their internal systems remain healthy. The outages experienced with Azure, AWS, and CloudFlare in late 2025 are examples.

In the software supply chain, malicious code injected into a single supplier may corrupt build pipelines or introduce compromised artifacts, affecting thousands of downstream enterprises as the malicious code is carried through the software distribution network. SolarWinds, Codecov, Log4j, MoveIT are well-known examples.

Disruption in payment ecosystems can paralyze operations across banks, merchants, and service providers, revealing how interconnected operations truly are. These scenarios highlight that resilience cannot be achieved in isolation. Resilience requires shared situational awareness, aligned expectations, and coordinated recovery mechanisms across the wider ecosystem.

Collective resilience is the next step—turning shared defense into shared delivery. Parties work together to ensure Operations-Critical services continue through a crisis, even when one or more participants are impaired. The emphasis shifts from only detecting and blocking to assuring service outcomes across organizations. A key consideration for this is ensuring that communication between organizations is resilient to enable this collaborative approach.

Because no organization operates alone, we must iteratively work with vendors, suppliers, and partners to co-develop resilient outcomes. Participants jointly identify cross-firm service dependencies, classify and prioritize affected services, and codify MVSLs and Service Delivery Objectives (SDOs) in plans, controls, and testing. Critical data sets are protected for confidentiality, integrity, and availability (CIA) (including effectively immutable storage and multiple-authorization deletion), recovery environments are provisioned and maintained, and independent evaluation and exercises verify that impaired-state operation can be established and sustained. Crucially, this includes coordinated, multi-party testing—tabletop, technical, and chaos-style exercises—so that dependencies, failovers, and communications are proven across the ecosystem, not just within a single enterprise.

Zero Trust provides the control language for making this real across organizational boundaries: assume breach, least privilege, and explicit verification so that collaboration does not expand the blast radius. Two guiding principles are especially relevant here: access is a deliberate act (all cross-organizational access, human or non-human, is intentional, time-bounded, policy-driven, and evidenced), and “inside out, not outside in” (protection starts from Operations-Critical services and critical data sets outward, rather than relying on a perimeter).

Disruptions increasingly originate or propagate through shared providers and integrations, making resilience an ecosystem property, not just an enterprise property. The ORF is designed so each participant follows common principles and rules—MVSLs, SDOs, protected critical data sets, tested recovery environments, and independent evaluation—that make the whole stronger and more resilient than the individual participants alone. Considering MVSLs across the ecosystem and conducting coordinated testing reduce the chance of systemic impact, make impaired-state operations predictable, and accelerate restoration for customers, partners, and counterparties when adverse events occur.

Finally, including important vendors and suppliers in the development and testing of resilient services is essential to the safety and soundness of our economy. It is not practical for each enterprise to test resilience one-to-one with every important supplier. These multi-sector exercises that span an ecosystem should be convened by an independent third party (e.g., BRC, ISACs, CSA) to ensure realism, neutrality, and broad participation.

Role of the Business Impact Analysis (BIA)

“Aligning the tone at the top with the resources in the ranks.”- Phil Venables

The Business Impact Analysis (BIA) is directly aligned with multiple Zero Trust guiding principles forming the basis of all resilience activities:

- Begin with the End in Mind (business/mission objectives)

- Inside Out, Not Outside In

- Breaches Happen

- Understand your Risk Appetite

- Ensure the Tone from the Top

The BIA also forms the basis for continuous improvement, supporting the Zero Trust guiding principle of “Continuously Monitor.”

The BIA assists the organization with the planning and implementation of Zero Trust principles. At its core, the relationship between resilience and Zero Trust is largely about Identity and Access Management (IAM) and managing the blast radius. The BIA is an accepted means of establishing priorities and determining dependencies by linking business strategy, security architecture, and operations. It begins with corporate policy and obligations, effectively establishing requirements and providing the basis for resilience testing to ensure objectives are met.

It is not unusual for larger organizations to develop a master BIA for the entire enterprise and then to have separate BIAs for key processes and parts of the organization. The latter is often referred to as an Activity BIA. This is done to handle complexity and to make the results more actionable. Some organizations have chosen to keep the resilience aspects as a separate document or an appendix to augment the BIA. For the purpose of this paper, we treat it as a single document.

Useful resources for learning more about developing BIAs include:

- The International Standards Organization (ISO)) has the most mature BIA references of the global standards. ISO 22317 (BIA) builds upon ISO 22300 (Security and Resilience) and ISO 22301 (Business Continuity Management)

- NIST SP 800-34 defines a BIA as, “Process of analyzing operational functions and the effect that a disruption might have on them”

- NIST IR 8286D, entitled “Using Business Impact Analysis to Inform Risk Prioritization and Response,” provides instructions on the development and use of a BIA

- The Business Resilience Council (BRC) under the Global Resilience Federation (GRF) has useful resources, as well

Through the lens of resilience and Zero Trust, the BIA is re-envisioned in key ways:

- Heavily influenced by external events (e.g., loss of a Cloud Service Provider (CSP))

- Addresses impact on the external ecosystem by internal events (e.g., ransomware)

- Driven by business priorities, business value of assets, and external obligations

- Heavy focus on dependencies between components that cooperate to deliver a business process, products, and services

- Addresses all three aspects of the confidentiality, integrity, availability (CIA) triad for each element

- Regards “acceptable” as a reduced level for a period of time until fully restored

It is important to remember when looking at impact that it is about business value, not technical severity. Using the BIA to establish priorities and to determine dependencies enables us to effectively allocate resources (e.g., capital, people, time, energy) to internal controls, including Incident Response (IR) and the protect, detect, and recover functions. These allocations can be made for global hazards without having to know every possible source of the hazard.

The BIA empowers us to align the business, security architecture, and operations.

Acceptable Levels

In the traditional worlds of Business Continuity (BC) and Disaster Recovery (DR), we rely primarily on two metrics: Recovery Point Objective (RPO) and Recovery Time Objective (RTO). RPO is the maximum allowable loss of data. RTO is the maximum acceptable downtime before it causes unacceptable business harm. Both are a measure of tolerance and drive practices throughout the enterprise (e.g., backup schedules).

In resilience, as opposed to BC/DR, we presume incidents and we recognize the advantages of operating in an impaired state while moving towards full restoration.

For each line item in the BIA, define the following:

-

Service Delivery Objectives (SDO): Defined by the ORF as, “The objectives that set the impaired level and time constraints for delivery of services in the event of a disruption”

-

Maximum Tolerable Loss: The maximum tolerable loss is typically measured in data (value, sensitivity, volume), value loss (e.g., revenue), or length of disruption

-

Minimum Viable Service Level (MVSL): Defined by the ORF as the lowest possible level of service delivery to enable customers, partners, and counterparties to deliver their critical services to their downstream customers, partners, and counterparties. The PCAST adopted the MVSL as defined by the ORF

-

The term is related to Impact Tolerance, defined by the Bank of England in Supervisory Statement SS1/21 as, “The maximum tolerable level of disruption to an important business service as measured by a length of time in addition to any other relevant metrics.” The MVSL is intended to meet the level of Impact Tolerance of customers, business partners, and counterparties. If there is a greater impact, or the service cannot meet the identified MVSL, then consumer harm and systemic impacts may occur

-

For example, “No more than 50,000 people will be without x (e.g., water, food, electricity, communications) for more than 1 week.” Example courtesy of Report to the President Strategy for Cyber-Physical Resilience: Fortifying Our Critical Infrastructure for a Digital World (page 27)

-

Organizations typically find it useful to set acceptable levels in the following order, with the integrity leg of the CIA triad gaining in priority with the increased adoption of Artificial Intelligence (AI):

- Availability (service delivery)

- Confidentiality (data loss)

- Integrity (data corruption)

These metrics are explored further in the Metrics and Indicators section.

Once Priorities Are Determined

After we have a stack ranking by management of what is meant to remain viable, we need to understand the relationships and dependencies by identifying the technology, the people, the process, and organizational dimensions that support each activity. Without knowing the underlying components, we cannot recover.

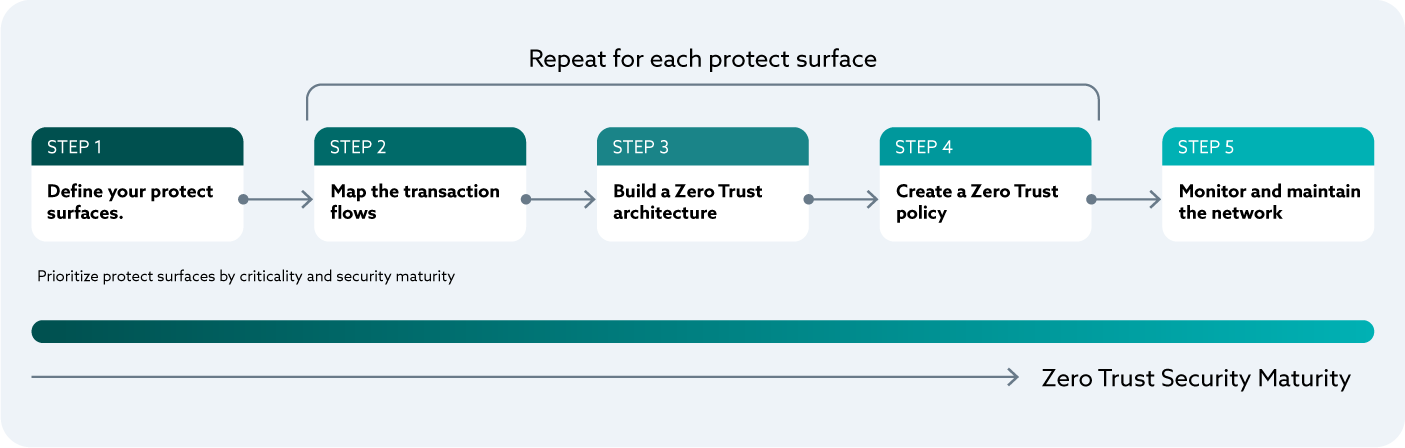

This directly maps to Zero Trust Step 1 of the five step process.

During this step, we also identify the sequence items that are restored. We cannot recover everything at once. Certain components are dependent on other items to be restored first.

The BIA helps establish an initial assessment of existing and desired levels of resilience.

Where to Find Obligations

While looking inside the four walls of the organization, we ask ourselves several questions, remembering the desired outcome is to stack rank technology, people, and processes by importance to the business.

- What is required to continue delivering our product and services?

- What is the minimal acceptable level we can deliver products and services to remain viable?

- What are the minimum viable levels for non-customer facing operations (e.g., payroll) to remain viable?

- How long can we go without each of these functions to remain viable?

Understanding single points of failure (SPOFs) is important. For each third party, ask yourself, “Can we operate without them?” and “How do we replace their role in the event of an incident?”

Be sure to look for where you are aggregating risk. This is a challenging task. By definition, a SPOF is a form of risk aggregation. Outside of SPOFs, we are looking for third parties that have large amounts of our data; are integrated in multiple business units, products, and services; or are engaged across our supply chain and industry. Why? As we continue to increase the business value held by a vendor, they become a larger target for malicious actors. As third parties grow in importance or the more connections they have, the greater the value to a malicious actor, thereby justifying greater commitment by the malicious actor and a greater risk to your organization.

Third Party Risk Management (TPRM)

In essence, we are looking at different forms of counter party risk and risk treatments. In today’s highly interconnected world, we cannot restrict our view to within our own four walls. The modern enterprise is highly dependent on external third parties including Cloud Service Providers (CSPs), Managed Service Providers (MSPs), and Managed Security Service Providers (MSSPs), all commonly referred to as External Service Providers (ESPs). Disrupting an ESP disrupts everyone with which they are connected.

The modern enterprise is almost always reliant on some form of supply chain. These can be highly complex, global supply chains like we see in industrial sectors like manufacturing, pharmaceutical, and aerospace. There are also simpler situations where back-office operations like payroll are outsourced and vendors are relied on for day-to-day maintenance, repair, and operations (MRO) ranging from office supplies to equipment. It is hard to find an organization that is not reliant on a software supply chain or a hardware supply chain.

The global nature of the business requires us to face the fact that an event on the other side of the world can ripple through, eventually impacting us. This is often referred to as contagion.

Interested Parties

Interested parties are the people and organizations to whom we are obligated. Interested parties fall into three categories:

- Our business’s internal obligations

- Upstream partners and customers to whom we supply products and services

- Downstream ESPs, partners suppliers, and vendors that we rely on

Obligations

The obligations help us understand minimal requirements to remain viable. Obligations come from several sources:

- Legislation

- Regulation

- Customers (including any communities served)

- Partners

- Corporate policies

- Operational needs

Our operational needs are often the easiest to forget. These are the operational items that are required to operate at acceptable levels. They often cut across large swatches of the organization. For example, identity and access control. If you restore an application or a business process but nobody can access the required application, you remain offline. If you do not have state information, you are not viable.

Each leg of the CIA triad is to be looked at separately. The confidentiality requirements are different from the availability requirements. Historically, the integrity leg is most important for devices, fraud, and effective operations. With AI, the integrity leg is growing in importance.

Introducing Abuse Use Cases to the BIA to Assess Adversarial Value

Traditionally, organizations prioritize cybersecurity investment on assets of most value to the organization. This approach conflicts with the typical attacker viewpoint, which is often focused on gaining access, sustaining that access, selling the access, or seeking out opportunities for extortion, theft, or fraud. By considering asset criticality both from the value to the organization and the value to an attacker, organizations can better prioritize investment to ultimately reduce the magnitude of impact from successful cyberattacks.

High Value Targets (HVTs) are information systems, data, roles, and processes for which unauthorized access, use, disclosure, disruption, modification, or destruction could cause a significant impact to an organization’s ability to perform its mission or conduct business. These systems may contain sensitive controls, configurations, instructions, or data that is then leveraged for critical information systems’ management. They may house unique collections of secrets, as well as be systems that perform defensive operations (such as delivering protect, detect, investigate, and respond capabilities). This methodology may be an extension of the “recognizability” factor described as the likelihood that potential adversaries would recognize that an asset is critical. HVTs can as well be referred to as transversal technology supporting important business services (or an organization’s crown jewels), which need to be identified and properly secured to better handle the environmental changes caused by an advanced adversary.

High Value Target Methodology

NIST Interagency Report (IR) 8286D reinforces this view by highlighting the importance of incorporating abuse case identification into risk assessments. Beyond traditional BIAs that often focus primarily on loss of availability, IR 8286D explicitly calls for also analyzing confidentiality and integrity abuse scenarios—understanding how attackers might exploit or manipulate data and systems—to ensure that adversarial value is fully captured in criticality assessments.

The Criticality, Accessibility, Recoverability, Vulnerability, Effect, and Recognizability (CARVER) target analysis and vulnerability assessment methodology is a way of completing the analysis.

Metrics and Indicators

“You can’t control what you can’t measure.” - Tom DeMarco

Metrics are objective measurements. Indicators are used to attract your attention for further investigation. Often it is not the metric that is important. Rather, it is the trend over time that is the most useful.

Think of your car’s dashboard. The check engine light is the indicator. Your mechanic running diagnostics provides the metrics to be used to uncover the root cause and remediate.

Indicators come in three types:

- Leading Indicators: These are the hardest to define and the most valuable. Leading indicators tell you something is likely to happen. The best leading indicators provide a sense of when and the impact

- Coincident Indicators: These tell you something is happening

- Lagging Indicators: These tell you something happened

The resilience community originally defined a new metric called the Maximum Tolerable Period of Disruption (MTPD) as the maximum time a business can experience a disruption before it faces unacceptable consequences like financial loss, reputational damage, or regulatory non-compliance. MTPD is effectively a version of RPO and RTO focused on a particular business process or function.

MTPD is also known as Maximum Acceptable Outage (MAO) or Maximum Tolerable Downtime (MTD). In practice, the Minimum Viable Service Level (MVSL) we used to develop the BIA is the most useful because it can be associated with individual business processes, products, and services.

The President’s Council of Advisors on Science and Technology (PCAST) report, “Strategy for Cyber-Physical Resilience Fortifying our Critical Infrastructure for a Digital World,” made the following recommendation to the U.S. President. Subsequently, this recommendation and three others were adopted and included in the U.S. National Strategies while under consideration by other countries and bodies. The recommendations are aligned with the principles contained in the ORF, and the ORF is one of the inputs to the report.

-

Recommendation 1: Establish Performance Goals. Set minimum delivery objectives for critical services, even in the face of adversity, and establish more ambitious performance goals to measure all organizations ability to achieve and sustain those objectives

- Recommendation 1A: Define Sector Minimum Viable Operating Capabilities and Minimum Viable Delivery Objectives

- Recommendation 1B: Establish and Measure Leading Indicators

- Recommendation 1C: Commit to Radical Transparency and Stress Testing. Executive Metrics are objective, measurable values used to assess progress towards reaching goals. Metrics help identify waste and inefficiencies, set realistic goals, compare performance to desired outcomes, and provide data to determine if adjustments are needed to achieve the desired outcomes

Example Minimum Viable Operating Objectives and Minimum Viable Delivery Objectives

-

Bounded Impact: Expresses the minimum delivery goals and is directly aligned with the Zero Trust principle of limiting the blast radius. For example, no more than 50,000 people will be without x (e.g., water, food, electricity, communications) for more than 1 week

-

Bounded Failure: A measure of the maximal impact of any single failure via containment of spread by creating independence and resilience of subsystems and components to failures of other components. Bounded Failure is also directly aligned with the underlying principle of limiting the blast radius emphasizing the drive to eliminate (or minimize) single points of failure (SPOF) and avoid the risk of cascading failures (cascading risk). You can see how segmentation and micro-segmentation can be used to prevent (or limit) an event causing additional events across the enterprise or outside of the enterprise

Having business partners who practice Bounded Impact and Bounded Failure help ensure events experienced by them avoid or at least limit damage to others in the ecosystem, avoiding contagion.

Leading Indicators

The intent of these metrics is to identify an organization’s most critical systems. Specific metrics would be created in the context of each sector and organization.

PCAST lists the following:

- Hard Restart Recovery Time

- Cyber-Physical Modularity

- Internet Denial/Communications Failure

- Fail-Over to Manual Operations

- Control Pressure Index

- Software Reproducibility

- Preventive Maintenance Vibrancy

- Inventory Completeness

- Stress Testing Vibrancy (red teaming)

- Common Mode Failures and Dependencies

Useful Metrics to Track

Organizations find it useful to track Single Points of Failure (SPOF), where risk is being aggregated, and sources of cascading risk (aka, cascading failure). These are useful to track while executing your program and during day-to-day operations. While remediating, it is recommended to track metrics like percentage of SPOFs remediated, variance of aggregated risk to risk tolerance, and percentage of cascading risk mitigated.

During day-to-day operations, it is useful to instrument, monitor, and track unremediated SPOFs. It is not unusual to have known risks, tracked in your risk register, that are not remediated for one reason or another (e.g., accepted, not feasible).

Additional useful metrics for consideration:

- Board: What threshold of what metric if exceeded (or not adhered to) demands immediate escalation to the Board? What is the most extreme but plausible scenario that we feel like we cannot withstand? What percentage of your infrastructure (on-premise or cloud) is software defined, follows an immutable infrastructure pattern and for which the configuration code is reproducible?

- Time to Reboot the Company: Imagine everything you have is wiped by a destructive attack or other cause. All you have is bare metal in your own data centers or empty cloud instances and a bunch of immutable back-ups (tape, optical, or other immutable storage). Then ask, how long does it take to rehydrate/rebuild your environment? In other words, how long does it take to reboot the company?

- Blast Radius Index: What percentage of roles in your organizations have a potential incident (insider risk or error driven) damage blast radius greater than the organization span of the role N steps (e.g., N=2) above that in the organization? For specific lines of attack (e.g., application compromise, e-mail delivered malware, web drive-by-downloads), what is the average level in the defense-in-depth stack that stops the attack and at what point is the attack detected?

- Reproducibility: What percentage of your entire software is reproducible through a CI/CD pipeline? If this percentage is low, then inevitably the time to resolve vulnerabilities or completeness of resolution will not be what you want

- OODA Spread: How much faster (or slower) is your OODA loop than your attacker’s? Responsiveness and adaptiveness in the face of an attacker’s capabilities and intent is a key signal of how likely you are to be subject to a successful attack

As well, the Cyber Resilience Index metrics measure an organization’s ability to anticipate, resist, adapt, and recover from cyber threats by assessing both preventive controls (governance, detection, protection) and response/recovery capabilities.

Technical Debt and Operational Technology (OT) Equipment Not Built for a Digital World

The measure of technical debt and OT equipment not built for a digital world is a leading indicator.

Technical debt is a term of art referring to the long-term costs of using suboptimal or outdated systems, like old servers, software dependencies, or poor security practices, instead of more robust, modern solutions. It can also refer to overly complex situations. Whatever form it takes in your enterprise, technical debt causes complexity, increases costs and consumes resources, creating risk to your enterprise. Organizations with a high level of technical debt often suffer from an inability to successfully implement both Zero Trust and resilience. A high level of technical support is often required to keep these suboptimal systems running, taking away resources from technical improvement.

OT equipment, like ICS, was often built to control physical processes not to manage digital information like IT. OT systems were initially designed to be isolated, reliable, and focused on physical operations, with security and connectivity as afterthoughts. While the core principles apply to OT, the tools and techniques are implemented quite differently. These fundamental differences create challenges as organizations demand IT/OT convergence. Incidents like Colonial Pipeline have highlighted how cyber incidents in IT systems can quickly impact the physical world.

Technical debt and OT equipment not built for a digital world can be the result of many things like independent growth, rapid growth, and merger and acquisition (M\&A) transactions. Whatever the cause, the result is increased likelihood of an incident, greater impact, slower detection, and prolonged resolution.

The tracking and measurement of technical debt and OT not built for a digital world are a leading indicator. While a universal measurement does not exist, some tools do exist. The standard for understanding IT software defined by the Consortium for Information & Software Quality (CISQ) is an example.

Role of the Supply Chain

The supply chain touches upon several Zero Trust guiding principles:

- Access is a Deliberate Act

- Breaches Happen

- Understand Your Risk Appetite

- Ensure the Tone from the Top

- Instill a Zero Trust Culture

- Continuously Monitor

The BIA addresses the significance of different members of the supply chain, recovery priorities, and the order of recovery.

The Age of Interconnection

In today’s global, digital business landscape, our organizations do not exist in isolation; they are nodes within sprawling, interconnected networks of suppliers, customers, logistics, technology providers, and regulatory bodies. An ecosystem that arguably guarantees both mutual destruction and mutual benefit! Events and disruptions in distant organizations can rapidly cascade through networks, upending operations, finances, and reputations.

- In 2025, fewer than 8% of organizations feel they have full control over their supply chain risks, while 63% report supply chain losses are above expectations

- Supply chain disruptions increased by 30% in the first half of 2024 compared to the previous year

- The interconnectedness means risk contagion; what happens to a single node, entity or partner can quickly propagate, affecting upstream and downstream suppliers, customers, and the broader ecosystem

How Other Organizations Impact Us and Vice Versa

-

Outbound Risk Amplification: If a critical supplier fails, outages, missed deliveries, or data breaches can quickly impact our ability to serve customers. Likewise, disruptions in our organization, cyber breaches, financial distress, compliance violations—not only affect us, but also propagate upstream to our partners and customers

-

Counterparty Risk and Contagion: Counterparty risk refers to the possibility that the entities we rely on (e.g., suppliers, vendors, customers) may themselves default, introduce systemic risk, or experience shocks that spread through the network. The concept of contagion in supply chains means incidents are not contained; a disruption, cyberattack, or compliance failure at one point can quickly travel across partners, even to entities with robust internal controls

Regulatory and Legislative Pressures

In recent years, we have seen a steady shift in global legislative and regulatory focus on supply chain risk. The EU’s Digital Operational Resilience Act (DORA), NIS2, and UK’s PRA requirements enforce strict third-party management and supply chain security standards for financial firms and critical infrastructure.

What is UK PRA?

The Prudential Regulation Authority (PRA) is a detailed framework for UK financial firms promoting safety and responsible risk management across the banking and insurance sectors. This ensures that third-party vendors and suppliers working with PRA-regulated firms, especially in the technology and cyber domain, face more direct regulatory scrutiny and a need for demonstrable security resilience and transparency.

Impact on Third-Party Vendors/Suppliers

From January 2025, the new Critical Third Parties (CTP) Regime gives UK regulators direct oversight of suppliers whose services are considered systemically important to the financial sector. Designated CTPs must comply with stricter requirements covering risk assessment, resilience measures, and supply chain oversight.

All significant third-party arrangements, including those not technically “outsourcing,” are subject to robust risk management expectations, including cybersecurity controls, business continuity, and incident response plans. Material non-outsourcing vendors face requirements similar to critical outsourcers, proportional to the risk level.

Financial firms must ensure that third-party contracts include clear security and compliance clauses, audit and access rights, and notification duties for any incidents affecting services.

All information and communication technology (ICT) suppliers must meet UK and global standards for cybersecurity (e.g., Cyber Essentials Plus), GDPR/data protection, and ongoing operational resilience.

Firms are required to notify the PRA about material issues with vendors, such as if a supplier can’t meet security contract terms or if the arrangement poses unique risks.

The overall aim is to reduce systemic risk from supplier failures, particularly due to cyberattacks or technology disruptions, and to ensure that both firms and regulators can monitor and intervene in supplier relationships as needed.

Seventy-five percent of supply chain leaders in 2025 noted board-level engagement and regulatory compliance as top priorities, citing a dramatic increase from previous years. Standards such as ISO 28000 (Supply Chain Security Management system (SCSMS)) and the NIST Cybersecurity Framework now explicitly define supply chain risk management as core requirements.

Legislation is now driving organizations to:

- Map out their supply chains, including subcontractors, fourth parties, and software dependencies

- Monitor for single points of failure (SPOFs) and aggregate risk exposure

- Conduct regular supplier audits, scenario testing, and resilience planning

- Demonstrate proactive risk management and incident reporting

Statistics and Metrics: The Risky Reality

According to a recent survey of 546 IT directors and CISOs by cybersecurity ratings vendor SecurityScorecard

- Seventy-one percent of organizations experienced at least one material third-party cybersecurity incident in the past year, and 5% reported ten or more such incidents,

- Supply chain attacks surged by 431% between 2021 and 2023, with projections for further dramatic increases

- Forty-five percent of organizations globally are expected to experience attacks on software supply chains by 2025—a threefold increase from 2021 levels

- The average cost of a cyber-related supply chain data breach reached $4.88 million in 2024, a 10% increase year over year

- The percentage of businesses that cite cybersecurity as their primary concern in ensuring supply chain resilience is 55.6%

- Sixty-two percent of leaders expect labor shortages to present ongoing short-term supply chain risk

- Nearly one-third of organizations now prioritize dual-sourcing and supplier diversification to mitigate disruption.

Other Important Metrics.

- Eight percent of organizations have full supply chain risk control

- Sixty-three percent report higher-than-expected losses

- Supply chain disruptions rose 30% in the first half of 2024

- Supply chain attacks are up 431% from 2021-2023

- $4.88 million average cost of supply chain cyber breach (2024)

- Cyber risk is the top supply chain concern for 55.6% of businesses

- Closer collaboration is the number one improvement strategy for 54% of leaders

Single Points of Failure (SPOF) and Risk Aggregation

A single weak link, whether it’s a supplier, logistics provider, or technology vendor, can act as a SPOF, threatening the entire value chain.

-

Digital transformation has intensified SPOF risks. A vulnerable API or software dependency can put multiple organizations at risk simultaneously

-

Aggregating risk occurs when too many dependencies, risks, or critical services are concentrated with one or a limited number of suppliers, regions, people, or technology, magnifying impact if a disruption occurs

-

In certain industries, customer failure can lead to a whole supply chain failing. Manufacturing industries, such as the motor trade, are an example of this. Many component factories are set up to supply large vehicle manufacturing plants. If the vehicle manufacturer has to stop production, the impacts cascade to the entire supply chain, many of which may be less resilient than the manufacturer themselves

Testing the Chain: Scenario Simulations and BIA Scenario Testing and Simulated Failure

- Effective supply chain risk management requires regular scenario testing that simulates supplier outages, cyberattacks, financial distress, and regulatory incidents

- Scenarios are designed to exercise items identified in the BIA

- These exercises reveal vulnerabilities, help calibrate response plans, and identify operational dependencies before real-world incidents occur

Business Impact Analysis (BIA)

- The BIA establishes priorities and criteria by mapping critical processes, dependencies, and recovery timelines